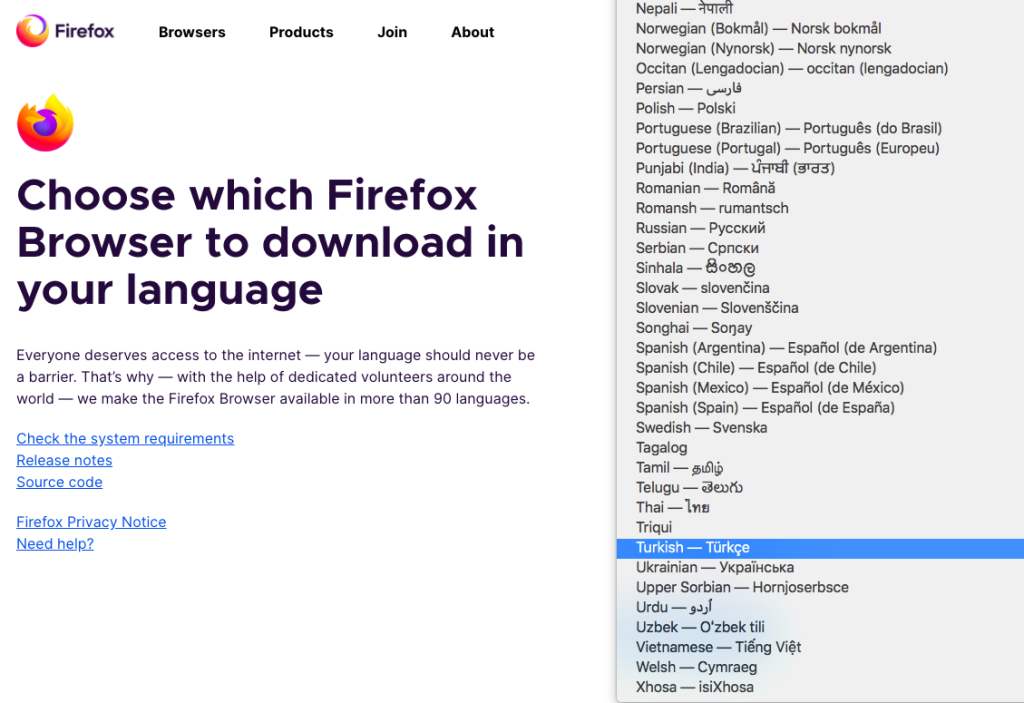

How many languages does it take for a localization program to make an impact? Often, it depends on who you ask, and what their priorities are. One of the people who always comes to mind when I think of a long list of user interface (UI) languages is Jeff Beatty at Mozilla. After all, Firefox is offered in more languages than can even fit in a standard screenshot, as you can appreciate below.

We tend to have many curiosities about localizing software products into less common languages. What does it feel like to know you made an outsized impact on a smaller language community? How did they build a business case to add a language like Welsh? Was localizing into Triqui… er, tricky?

Here is an interview between Jeff and me, in which we dig into some of the details of his experience and his advice on supporting what we in the localization space usually refer to as “long-tail languages.” I hope it will be helpful to others.

Can you briefly describe your background in localization and how you ended up working in your current role?

I’ve been working on the client side (and a little on the vendor side) of the language industry for over a decade. I’ve been everything from a freelance translator and court interpreter to the lead of localization at Mozilla. Through all of these roles, I’ve always been drawn to projects with atypical specifications that require a special approach to localization, and Mozilla is full of those.

How do you define “long-tail language?” What does online population size have to do with it?

Long-tail languages are those for which revenue acquisition represents a long-term investment strategy. They are more risky and complex than your typical EFIGS (English, French, Italian, German, Spanish) line-up, but they play a key role in speaking to the hearts and minds of people in specific regions.

Online population size is what makes these languages more risky. Because they skew toward the lower end of the scale, the total online buying power of those consumers is lower as well. However, as that population size grows, these languages will become the next competitive ground for companies looking to enter emerging markets or focus on micro-markets.

Can you share some of the strategies you’ve used to address long-tail language needs?

For Mozilla, these strategies start with our mission to secure the Internet as a global, public resource. As a prominent open source project with strong brand recognition, Mozilla attracts language activists and open source enthusiasts from around the globe whose mission is to spread software in their language. Remaining open to contribution has been critical to enabling these communities to thrive.

Another element of this is equity. Global accessibility aims to emphasize equity in the software development process. The solutions we’ve created for localization have revolved around being as equitable as possible, dedicating the same design principles and intention to all languages.

Finally, we’ve invested a lot in personal relationships with members of these long-tail communities in order to ally ourselves with them in their cause. These relationships have created spaces where community members feel confident approaching us, describing their needs, and collaborating with us to address them. Being closely aligned with these communities helps us to empathize with their struggles, see and understand their needs, and identify ways in which we can support them.

Can you talk about how to ensure quality for these languages?

Quality standards for these languages have to be flexible. Each language is at a different milestone along the path toward full digitization and standardization. As such, some will have official orthographies and processes for coining neologisms, and individuals will be trained on them. For these, many of the standard quality management practices are still relevant; it’s more a question of ensuring access to enough qualified eyes.

Other languages currently don’t enjoy the benefits of standardized resources and are still in a more nascent state along the path toward digitization. In addition to the lack of standardized resources, they will have limited representation in Unicode, and the criteria for defining a “qualified translator” will vary. In the absence of these resources, translators will have to rely on corporate style guides and terminology for consistency, and work toward the ability to rely on translation memory for sentence-level consistency.

Mozilla has gone so far as to build its own community translation management system (TMS): Pontoon. What is the state of technology for these languages? Are there challenges due to lack of support from MT and CAT tools, termbase software?

The state of technology varies for the same reason quality standards and management vary: each language’s progress along the path toward full digitization and standardization. It all depends on the availability of locale-specific data. CAT tools and termbase software rely on the availability of locale data from Unicode. That data is often baked into the software, so if the language has date/time formatting, character sets, and plural rules defined in Unicode, that support will likely be in these tools too.

MT and voice technology add more layers to the “data challenge” pile. The data challenges fit into three categories: absence of data sets, unstructured data sets, and licensing constraints. While machine learning algorithms can, by and large, adapt to the languages they’re introduced to, the issue is having open access to data sets that are large enough, fit for purpose, and annotated for those algorithms to work with. Organizations like SYSTRAN and TAUS are working to solve some of these problems through data and neural model brokerage, but it will take time to see how well these data set marketplaces solve the problems these languages face.

What has surprised you the most about working with long-tail languages?

What I’ve observed is that one of the primary differences between localization into FIGS or other commonly targeted locales and localization into long-tail languages is that creativity is applied in so many unique ways. For German, there will almost always be UI text area constraints. Translators for many long-tail languages face these same constraints, but they often also lack a readily available target-language equivalent for a term. I’ve been very surprised by these translators’ creative ability to coin new terms for technical concepts through neologisms. It’s a fascinating process to observe and be a part of.

Which long-tail language has been the most difficult?

I can’t speak to any one specific language, but I will say that the language communities we work with that lack standardization support are often the most challenging. We either have to custom-define plural rules or wait until they can be defined upstream in Unicode. We could definitely be doing more to bridge the locale data gap by helping communities upstream their data to Unicode (or by representing them to Unicode), but there’s also a technical barrier to entry for many of them. The work that Translation Commons is doing to define a digitization roadmap and help these communities along that path can open a lot of doors and reduce the complexity of pursuing localization into many of these languages.
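To make "custom-defining plural rules" more concrete, here is a minimal Python sketch of the general idea, not a description of how Mozilla or Pontoon actually implements it. It assumes a tool first looks for a plural rule in its bundled upstream locale data and falls back to a project-level rule when none exists. The names `UPSTREAM_RULES`, `CUSTOM_RULES`, `plural_category`, and `select_message` are hypothetical, and the locale code `qaa` (from the range reserved for private use) and its rule are invented purely for illustration.

```python
from typing import Callable, Dict

# A plural rule maps a whole number to a plural category name
# such as "one" or "other".
PluralRule = Callable[[int], str]

# Stand-in for the plural data a tool would normally bundle from upstream
# locale data. Only two simple, well-known cases are listed here.
UPSTREAM_RULES: Dict[str, PluralRule] = {
    "en": lambda n: "one" if n == 1 else "other",  # English: 1 vs. everything else
    "ja": lambda n: "other",                       # Japanese: no plural distinction
}

# Hypothetical project-level rules for locales with no upstream definition yet.
# "qaa" is a private-use code and this rule is invented for the example.
CUSTOM_RULES: Dict[str, PluralRule] = {
    "qaa": lambda n: "one" if n in (0, 1) else "other",
}


def plural_category(locale: str, n: int) -> str:
    """Return the plural category for n, preferring upstream data."""
    rule = UPSTREAM_RULES.get(locale) or CUSTOM_RULES.get(locale)
    if rule is None:
        # No rule anywhere: fall back to the catch-all category.
        return "other"
    return rule(n)


def select_message(locale: str, n: int, variants: Dict[str, str]) -> str:
    """Pick the translated variant matching n and fill in the number."""
    category = plural_category(locale, n)
    template = variants.get(category, variants["other"])
    return template.format(n=n)
```

With this in place, `select_message("en", 1, {"one": "{n} tab", "other": "{n} tabs"})` picks the singular variant, while a locale with no rule at all falls back to the "other" variant. The trade-off in the answer above is visible in `CUSTOM_RULES`: a project-level rule unblocks one product, but only an upstream definition benefits every tool that ships the shared locale data.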

Any fun anecdotes that will go down in your “favorite moments”?

It’s not my story, but we sent one of our Localization Program Managers to Paraguay to support the Mozilla Aguaratata (Firefox in Guaraní) community and the launch of Firefox desktop and Firefox for Android in the language. She wasn’t aware of it until she landed and was picked up at the airport, but she had been booked to appear on Paraguayan TV to talk about the localization launch, and the interview was scheduled for an hour or so after she landed. Fortunately, she’s fluent in Spanish and could participate in the interview, but she had just ended a 12-hour journey to get to Paraguay and was very suddenly thrust into the literal spotlight!

What would you advise to someone developing a strategy for long-tail languages?

I have three pieces of advice. First, define your purpose for developing a long-tail language strategy. Because any anticipated return will take more time than what you saw for Spanish or Japanese, you will need a purpose-driven strategy to sustain the effort in the long term. Second, work with single-language vendors or locals directly. The cultures and languages you’ll be working with will likely be starting from a very different place than the cultures and languages you’re used to, and will encounter challenges that only specialists deeply embedded in the culture will be able to overcome. And finally, be transparent and collaborative. One of the biggest challenges for these languages is the lack of available resources and data. Find ways to be open to sharing the data you create and partnering with organizations that are aligned with your strategic purpose so that the tide can raise all ships.

What can companies do now to ensure scalability and support for long-tail languages later, even if they might not be addressing them for several years?

I strongly believe that companies with strong cultural intelligence make strong products. Investing in diversity and inclusion initiatives and hiring people who possess a high level of cultural intelligence and empathy can lay the groundwork for the purpose-driven strategy you’ll need to sustain your long-tail language support efforts.